The "Dual-Write" Trap: Why Your Microservices Need the Outbox Pattern

You saved the data, but did the event publish? How to guarantee consistency in .NET distributed systems.

The Silent Killer of Consistency

Hey dev! Let’s talk about a scenario that has kept many of us up at night.

You are designing a .NET microservice. Your logic seems solid:

- Register a new Order in the database (SQL Server/PostgreSQL).

- Publish an OrderCreated event to the message broker (RabbitMQ/Azure Service Bus) so the Shipping Service can do its job.

You write the code, wrap the database save in a transaction, and right after SaveChanges(), you call bus.Publish(). It works on your machine. It works in QA.

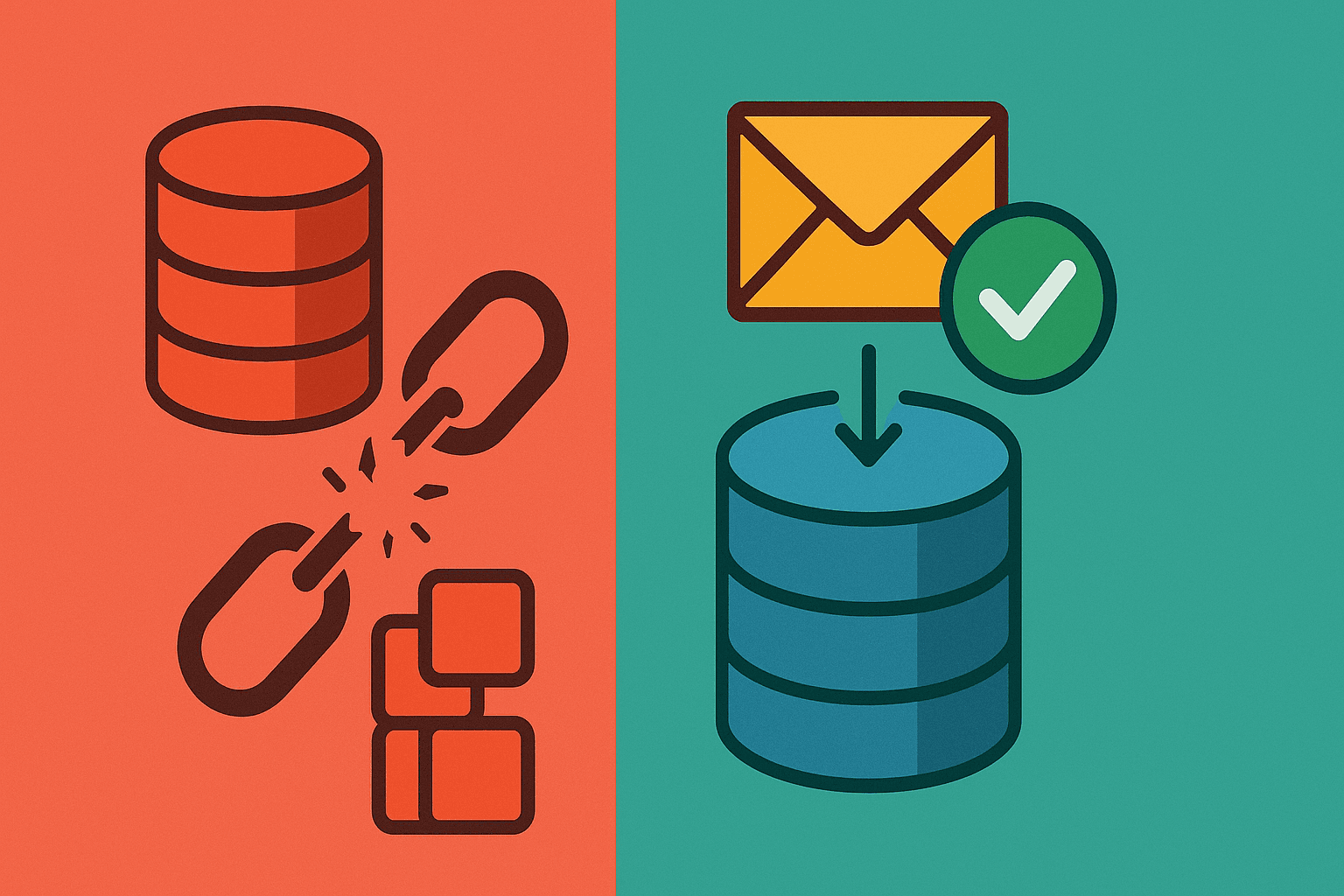

But in production, the network blips. The database transaction commits successfully, but the message broker is unreachable for just 500ms.

Result: You have an order in the database, but the Shipping Service never got the memo. Your system is now inconsistent, and you have a "zombie order" that will never be shipped.

This is the Dual-Write Problem. You are trying to write to two independent systems (DB and Broker) without a distributed transaction such as two-phase commit (2PC), so one write can succeed while the other fails.
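To make the failure concrete, here is a minimal sketch of the dual write using in-memory stand-ins. FakeDatabase, FlakyBroker, and NaiveOrderService are hypothetical names invented for this illustration, not real library types; a real service would use EF Core and a broker client instead.

```csharp
using System;
using System.Collections.Generic;

// Hypothetical in-memory stand-in for the database.
public sealed class FakeDatabase
{
    public List<Guid> Orders { get; } = new();

    // In this sketch the "commit" always succeeds.
    public void SaveOrder(Guid orderId) => Orders.Add(orderId);
}

// Hypothetical in-memory stand-in for the message broker.
public sealed class FlakyBroker
{
    public bool IsReachable { get; set; } = true;
    public List<string> Delivered { get; } = new();

    public void Publish(string message)
    {
        if (!IsReachable)
            throw new InvalidOperationException("Broker unreachable");
        Delivered.Add(message);
    }
}

public static class NaiveOrderService
{
    // Dual write: two independent systems, no shared transaction.
    public static void CreateOrder(FakeDatabase db, FlakyBroker broker, Guid orderId)
    {
        db.SaveOrder(orderId);                      // step 1: already durable
        broker.Publish($"OrderCreated:{orderId}");  // step 2: can throw after step 1
    }
}
```

With IsReachable set to false, CreateOrder throws after the order is already stored: db.Orders holds one entry while broker.Delivered stays empty. That is the "zombie order" in miniature.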

Enter the Outbox Pattern

The solution isn't to retry endlessly inside your HTTP request (that kills performance). The solution is to make the "sending of the message" part of the "saving of the data."

This is where the Transactional Outbox Pattern comes in.

Instead of publishing directly to the broker, you save the message payload to a specific table (the "Outbox") in your database inside the same transaction as your business data.

- Start Transaction.

- Save Order.

- Save OrderCreated message to the Outbox table.

- Commit Transaction.

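Now, the operation is atomic. If the DB save fails, the message isn't saved. If it succeeds, the message is guaranteed to be in the Outbox table. A separate background process then picks up the message from the table and pushes it to the broker, retrying until it succeeds. Since a retry can occasionally deliver the same message twice, consumers should be prepared to handle duplicates.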
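The steps above can be sketched without any infrastructure. This is only an in-memory illustration under stated assumptions: InMemoryStore, InMemoryBroker, OutboxMessage, and OutboxRelay are hypothetical names, and the SaveOrderWithEvent method stands in for a real database transaction.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

// Hypothetical outbox row; SentAtUtc stays null until the relay delivers it.
public sealed record OutboxMessage(Guid Id, string MessageType, string Payload)
{
    public DateTime? SentAtUtc { get; set; }
}

public sealed class InMemoryStore
{
    public List<Guid> Orders { get; } = new();
    public List<OutboxMessage> Outbox { get; } = new();

    // Stand-in for one database transaction: both rows are written, or neither.
    public void SaveOrderWithEvent(Guid orderId, bool failCommit = false)
    {
        if (failCommit)
            throw new InvalidOperationException("Commit failed; nothing was written");
        Orders.Add(orderId);
        Outbox.Add(new OutboxMessage(Guid.NewGuid(), "OrderCreated", orderId.ToString()));
    }
}

public sealed class InMemoryBroker
{
    public bool IsReachable { get; set; } = true;
    public List<string> Delivered { get; } = new();

    public void Publish(string payload)
    {
        if (!IsReachable)
            throw new InvalidOperationException("Broker unreachable");
        Delivered.Add(payload);
    }
}

public static class OutboxRelay
{
    // One polling pass: publish every unsent message, then mark it as sent.
    // Returns how many messages were delivered in this pass.
    public static int RunOnce(InMemoryStore store, InMemoryBroker broker)
    {
        var delivered = 0;
        foreach (var message in store.Outbox.Where(m => m.SentAtUtc is null).ToList())
        {
            try
            {
                broker.Publish(message.Payload);
            }
            catch (InvalidOperationException)
            {
                break; // broker is down: leave the rows untouched for the next pass
            }
            message.SentAtUtc = DateTime.UtcNow;
            delivered++;
        }
        return delivered;
    }
}
```

If the broker is unreachable, RunOnce delivers nothing and the row simply waits in the Outbox; the next pass after the broker recovers picks it up. A production relay would also need locking so that two instances don't send the same row, which this sketch leaves out.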

Doing It in .NET (The Smart Way)

You could implement this mechanism from scratch (polling, locking, retries), but that means rebuilding infrastructure a library already provides, which cuts against the DRY (Don't Repeat Yourself) principle we discussed in our previous article. We don't want to maintain infrastructure code if we don't have to.

In the .NET ecosystem, MassTransit handles this elegantly. It integrates directly with Entity Framework Core, effectively making the Outbox pattern a configuration detail rather than a coding burden.

Configuration Example

Here is how you set it up in Program.cs:

```csharp
services.AddMassTransit(x =>
{
    x.AddEntityFrameworkOutbox<OrderDbContext>(o =>
    {
        // How long the delivery service waits before polling the DB again
        // once the outbox is empty (configurable)
        o.QueryDelay = TimeSpan.FromSeconds(10);

        // Use PostgreSQL-specific locking when reading the outbox tables
        o.UsePostgres();

        // Route Publish/Send calls through the outbox instead of straight to the broker,
        // so messages are stored even if the broker is unreachable
        o.UseBusOutbox();
    });

    x.UsingRabbitMq((context, cfg) =>
    {
        cfg.ConfigureEndpoints(context);
    });
});
```
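One piece the configuration above takes for granted is that OrderDbContext actually maps the outbox tables. A minimal sketch, assuming the MassTransit.EntityFrameworkCore package and a hypothetical Order entity (the real one isn't shown in this article); check the method names against the MassTransit version you use:

```csharp
using System;
using MassTransit;
using Microsoft.EntityFrameworkCore;

// Hypothetical entity, reduced to the one property this article uses.
public class Order
{
    public Guid Id { get; set; }
}

public class OrderDbContext : DbContext
{
    public OrderDbContext(DbContextOptions<OrderDbContext> options) : base(options) { }

    public DbSet<Order> Orders => Set<Order>();

    protected override void OnModelCreating(ModelBuilder modelBuilder)
    {
        base.OnModelCreating(modelBuilder);

        // Entities MassTransit uses for its transactional inbox/outbox tables
        modelBuilder.AddInboxStateEntity();
        modelBuilder.AddOutboxMessageEntity();
        modelBuilder.AddOutboxStateEntity();
    }
}
```

After adding these mappings you would typically create and apply an EF Core migration so the tables exist before the service starts.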

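And in your application code? Almost nothing changes. You still call Publish; you just need SaveChangesAsync to run on the same DbContext afterwards.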

```csharp
// The 'Publish' call here doesn't hit RabbitMQ immediately.
// It adds the message to the DbContext as a pending Outbox row.
await _publishEndpoint.Publish(new OrderCreated(order.Id));

// The atomic commit happens here. Data + Message saved together.
// Simplicity is key!
await _dbContext.SaveChangesAsync();
```

Why This Matters

This isn't just about "clean architecture." It's about operational resilience.

By using the Outbox pattern, you decouple your service's availability from the message broker's availability. If RabbitMQ is down, your service can still accept orders. The messages will just sit safely in the Outbox table until the connection is restored.

It turns a distributed consistency nightmare into a reliable, self-healing mechanism.

Final Thoughts

As we move from monoliths to microservices—like the transition from REST to gRPC we explored recently—we trade simple function calls for complex network interactions.

We must ensure our systems are robust enough to handle these complexities without keeping us awake at night. Tools like MassTransit in .NET encapsulate this complexity so you can focus on what matters: the business logic.

What about you? Have you ever dealt with "zombie records" where data exists but downstream services don't know about it? How did you solve it?
